The Most Human Problem in Engineering

When we speak, our brain sends precise electrical signals to over a dozen facial and neck muscles — the masseter, the digastric, the risorius, the zygomaticus major. Even when no sound comes out, those signals still fire. That is the fundamental insight behind Silent Speech Interface research, and it is the foundation of TrueVoice.

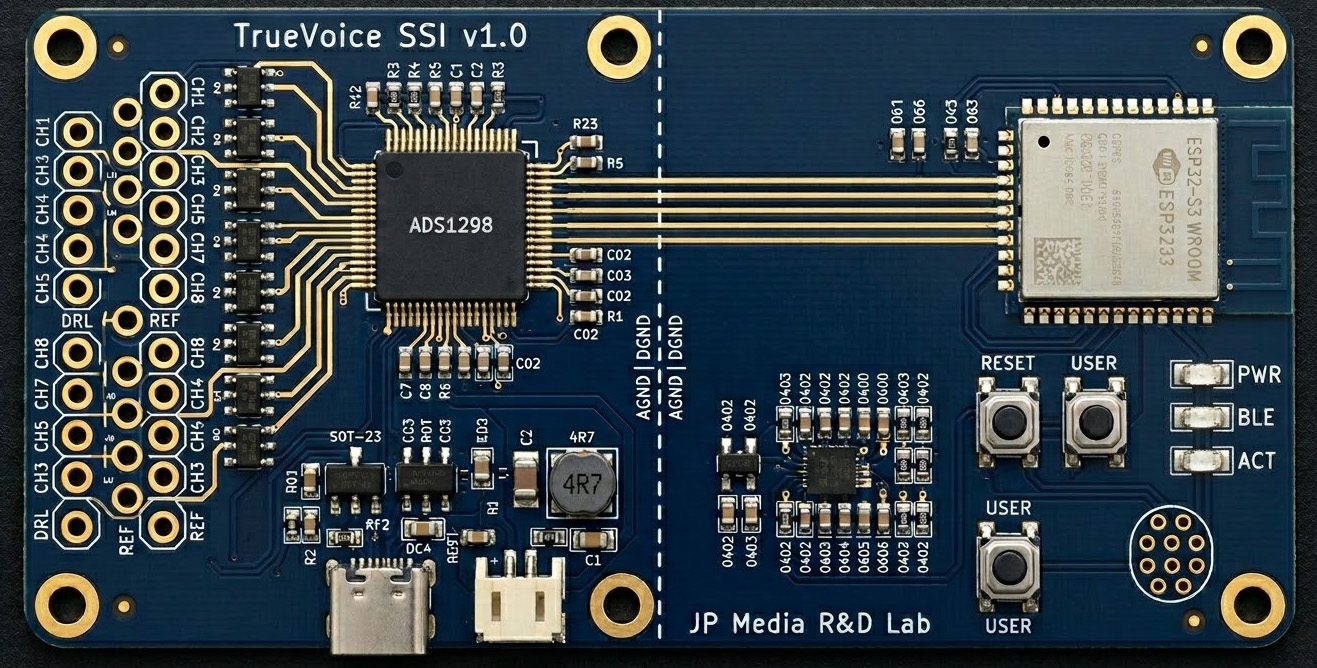

Surface electromyography (sEMG) can measure these micro-electrical patterns at the skin surface. With enough channels, simultaneous sampling, and modern AI, those patterns can be decoded into the phonemes and words a person intended to say — and then spoken aloud in that person's own cloned voice.

This is not science fiction. It is an active frontier of biomedical research, and it is what TrueVoice is building toward.

Concept Vision

Eight electrode patches on the jaw, cheek, and neck. The main unit clips to the collar. The phone speaks. No throat buzzer — just the person's own voice, restored.

Not a Founder Story

My life partner was 21 when throat cancer took her voice. What replaced it was a plastic device that buzzed when pressed to the neck. It let her be understood. It did not let her be heard — not really, not as herself.

She passed away in 2005. I'm not sharing this to tell a compelling founder story. I'm sharing it because it's the reason this project exists, and because she deserves to be the reason — not a footnote in a pitch deck.

Twenty-one years later, the technology finally exists to do this properly. TrueVoice is not a product looking for a market. It's a long-overdue answer to a problem I watched someone I loved face alone.

— Founder, JP Media R&D Lab · Nova Scotia, Canada